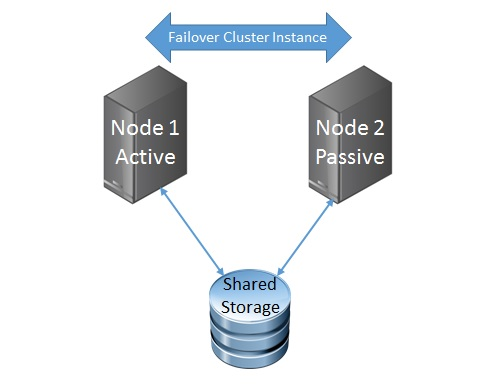

With Virtual SAN 6.1 (vSphere 6.0U1), VMware announced full support for Oracle RAC, Microsoft Exchange DAG and Microsoft SQL AAG, but I quite often get asked about the traditional form of Windows clustering, which is often referred to as a Failover Cluster Instance (FCI). In the days of Windows 2000/2003 this was called Microsoft Cluster Service (MSCS), and from Windows 2008 onwards it became known as Windows Failover Clustering. Typically this consists of one active node and one or more passive nodes using a shared storage solution, as shown in the image above.

When creating a failover cluster instance in a virtualised environment on vSphere, shared storage is still a requirement. This can be achieved in one of two ways:

- Shared Raw Device Mappings (RDM): one or more physical LUNs are presented as raw devices to the cluster virtual machines, in either physical or virtual compatibility mode, and the guest OS writes a file system directly to the LUNs.
- Shared Virtual Machine Disks (VMDK): one or more VMDKs are presented to the cluster virtual machines.

With both MSCS and FCI, every virtual machine in the cluster needs the virtual SCSI adapter to which the shared RDMs or VMDKs are attached set to SCSI Bus Sharing. This allows all the VMs to access the same underlying disk, and it also allows the clustering software to place SCSI-2 (MSCS) or SCSI-3 (FCI) reservations on the disks.
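
The difference between the two reservation styles can be sketched as a toy Python model of one shared disk. This is a simplified illustration under stated assumptions, not the SCSI command set: `SharedDisk` and its methods are hypothetical names, a `True` return stands in for GOOD status and `False` for RESERVATION CONFLICT, and node names double as SCSI-3 reservation keys. The one behaviour it tries to capture faithfully is that a SCSI-2 reservation is lost on a bus reset, whereas a SCSI-3 persistent reservation survives it.

```python
from dataclasses import dataclass, field
from typing import Optional, Set


@dataclass
class SharedDisk:
    """Toy model of one shared LUN (hypothetical; not a real SCSI API)."""

    scsi2_holder: Optional[str] = None                 # SCSI-2: single holder, no registration
    registered: Set[str] = field(default_factory=set)  # SCSI-3: registered keys
    pr_holder: Optional[str] = None                    # SCSI-3: current reservation holder

    # SCSI-2 RESERVE / RELEASE: True ~ GOOD, False ~ RESERVATION CONFLICT.
    def reserve2(self, node: str) -> bool:
        if self.scsi2_holder not in (None, node):
            return False
        self.scsi2_holder = node
        return True

    def release2(self, node: str) -> None:
        if self.scsi2_holder == node:
            self.scsi2_holder = None

    # SCSI-3 PERSISTENT RESERVE OUT: a node must REGISTER before it can RESERVE.
    def register(self, node: str) -> None:
        self.registered.add(node)

    def reserve3(self, node: str) -> bool:
        if node not in self.registered or self.pr_holder not in (None, node):
            return False
        self.pr_holder = node
        return True

    def bus_reset(self) -> None:
        # A reset drops the SCSI-2 reservation; the persistent reservation survives.
        self.scsi2_holder = None

    def can_write(self, node: str) -> bool:
        # Writes are refused while any other node holds either kind of reservation.
        return all(h in (None, node) for h in (self.scsi2_holder, self.pr_holder))


# SCSI-2: node-b can take the disk once a reset has cleared node-a's reservation.
disk = SharedDisk()
disk.reserve2("node-a")
disk.bus_reset()
print(disk.reserve2("node-b"))  # True

# SCSI-3: node-a's persistent reservation is still in place after the reset.
pr_disk = SharedDisk()
pr_disk.register("node-a")
pr_disk.register("node-b")
pr_disk.reserve3("node-a")
pr_disk.bus_reset()
print(pr_disk.reserve3("node-b"))  # False
```

In both cases the reservation state has to live in one place that every node talks to; on a physical SAN or a bus-shared virtual disk, that place is the disk controller.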

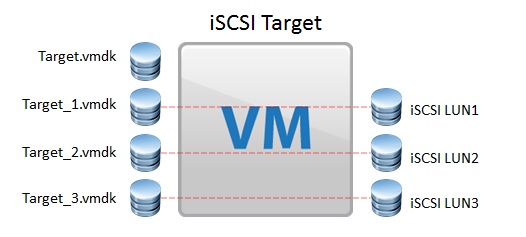

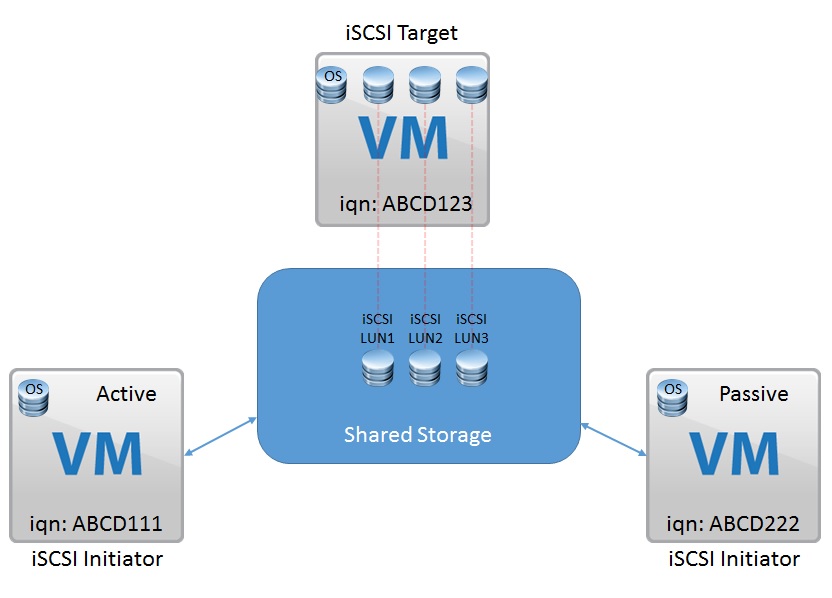

Now, since Virtual SAN is not a distributed file system and a disk object can be placed across multiple disks and hosts within the Virtual SAN cluster, there is no SCSI-2 or SCSI-3 reservation mechanism, so SCSI Bus Sharing will not work. Remember that each capacity disk in Virtual SAN is an individual contributor to the overall storage. With Virtual SAN it is still possible to create an MSCS/FCI by using in-guest software iSCSI. To accomplish this we need the cluster nodes as well as an iSCSI target, which has VMDKs assigned to it.

In the example shown there are four VMDKs assigned: the first is the OS disk for the iSCSI target and the other three will be used as the cluster shared disks. There is no need to set these disks to any type of SCSI Bus Sharing, as the in-guest iSCSI target manages them directly. The underlying VMDKs still have a Virtual SAN storage policy applied to them, so FTT=1 (Number of Failures to Tolerate), for example, creates a mirror copy of each VMDK elsewhere within the Virtual SAN cluster.
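
As a rough worked example of what that mirror costs, the sketch below totals the raw Virtual SAN capacity consumed by the iSCSI target's four VMDKs under a RAID-1 (mirroring) policy. The disk sizes are hypothetical and the function names are illustrative; it assumes the disks are fully written (thin-provisioned space that is never used would not be consumed) and ignores the small witness components. With mirroring, FTT=n keeps n+1 full copies of each VMDK and, in the simplest placement, needs at least 2n+1 hosts.

```python
from typing import Dict


def raw_capacity_gb(vmdk_sizes_gb: Dict[str, int], ftt: int = 1) -> int:
    """Raw capacity for a RAID-1 (mirroring) policy: FTT + 1 full copies per VMDK.

    Witness components are tiny metadata objects and are ignored here.
    """
    copies = ftt + 1
    return sum(size * copies for size in vmdk_sizes_gb.values())


def min_hosts_raid1(ftt: int) -> int:
    """Simplest placement: FTT + 1 replicas plus FTT witnesses, each on a separate host."""
    return 2 * ftt + 1


# Hypothetical sizes (GB) for the iSCSI target's OS disk and three shared disks.
target_disks = {"os": 40, "shared1": 10, "shared2": 100, "shared3": 200}

print(raw_capacity_gb(target_disks))  # 700 (350 GB of VMDKs, mirrored once)
print(min_hosts_raid1(1))             # 3
```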

The cluster nodes themselves would be standard Windows 2003/2008/2012 virtual machines with the software iSCSI initiator enabled and used to access the LUNs presented by the iSCSI target. Again, since the in-guest iSCSI manages the LUNs directly, there is no need to share VMDKs or RDMs using SCSI Bus Sharing on the virtual machine SCSI adapter. The cluster nodes can also reside on Virtual SAN, so you would typically end up with each cluster virtual machine accessing the shared storage via its in-guest iSCSI initiator.

Since each cluster node also resides on Virtual SAN, its OS disk can also have a storage policy defined. Building an MSCS/FCI this way does not infringe on any supportability rules, as you are not doing anything you should not be doing from either a VMware or a Microsoft perspective. All the SCSI-2 reservations and SCSI-3 persistent reservations are handled within the iSCSI target without hitting the Virtual SAN layer, so provided the guest OS of the iSCSI target supports them, there should be no issue and the MSCS/FCI should work as expected. It is also worth noting that Fault Tolerance is supported on Virtual SAN 6.1 and could be used to protect the iSCSI target VM's compute against a host failure.
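
The point that every reservation lives inside the iSCSI target VM can be sketched with a toy Python model. `IscsiTargetModel` and the LUN names are hypothetical, and PREEMPT is simplified: a surviving registered node removes the failed node's registration and takes over its reservation, which is roughly how a passive node claims the disks after the active node's host fails. Windows' actual arbitration sequence is not modelled. Note that nothing in the model knows or cares how many Virtual SAN components sit behind each LUN.

```python
from typing import Dict, List, Optional, Set


class IscsiTargetModel:
    """Hypothetical in-guest iSCSI target keeping one reservation table for its LUNs.

    Each LUN is backed by a VMDK whose vSAN components may live on several hosts,
    but none of that is visible here: reservation state exists only in this VM.
    """

    def __init__(self, luns: List[str]) -> None:
        self.registrations: Dict[str, Set[str]] = {lun: set() for lun in luns}
        self.holder: Dict[str, Optional[str]] = {lun: None for lun in luns}

    def register(self, lun: str, node: str) -> None:
        self.registrations[lun].add(node)

    def reserve(self, lun: str, node: str) -> bool:
        # Only a registered node may reserve, and only if nobody else holds the LUN.
        if node not in self.registrations[lun] or self.holder[lun] not in (None, node):
            return False
        self.holder[lun] = node
        return True

    def preempt(self, lun: str, node: str, victim: str) -> bool:
        # A registered node evicts the victim's registration and, if the victim
        # held the reservation, takes it over.
        if node not in self.registrations[lun]:
            return False
        self.registrations[lun].discard(victim)
        if self.holder[lun] == victim:
            self.holder[lun] = node
        return True


target = IscsiTargetModel(["quorum", "data1", "data2"])
for lun in ("quorum", "data1", "data2"):
    for node in ("node-a", "node-b"):
        target.register(lun, node)
    target.reserve(lun, "node-a")  # node-a is the active node

# node-a's host fails; node-b preempts it on every LUN and becomes the owner.
for lun in ("quorum", "data1", "data2"):
    target.preempt(lun, "node-b", "node-a")

print(target.holder)  # {'quorum': 'node-b', 'data1': 'node-b', 'data2': 'node-b'}
```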

In my testing I used an Ubuntu virtual machine as well as Windows Storage Server as the iSCSI target virtual machine, and Windows 2003/2008/2012 as the clustered virtual machines hosting a number of cluster resources such as SharePoint, SQL and Exchange. At the time of writing this configuration is not officially supported by VMware and should not be used in production.

As newer clustering technologies come along, I expect the requirement for failover cluster instances to disappear. This is already happening with Exchange DAG and SQL AAG, where the nodes replicate between themselves, which removes the need for a shared disk between them.

One question I do get asked is how Oracle RAC can be supported on Virtual SAN, as it also uses shared disks. The answer is that, unlike MSCS/FCI, which uses SCSI Bus Sharing, Oracle RAC uses the multi-writer option on the shared virtual disks. Oracle RAC has a distributed write capability and handles simultaneous writes from different nodes internally to avoid data loss. For further information on setting up Oracle RAC on Virtual SAN, please see KB article 2121181.

“Now since Virtual SAN is not a distributed file system, and a disk object could be placed across multiple disks and hosts within the Virtual SAN cluster, the SCSI-2 and SCSI-3 reservation mechanism is non-existent” – how is this true considering the VM sees the VMDK as a physical disk (which could live on wooden sticks and moving balls for all it knows)?

Hi Peter

Because disk objects are spread across multiple nodes (component distribution), there is no mechanism to establish a SCSI-2 or SCSI-3 reservation on all the components of an object. A SCSI-3 persistent reservation would need to be established on, at a minimum, both mirror replicas plus the witness, and each of these components lives on a different host in the cluster. This gets even more spread out with all-flash erasure coding, as RAID-5 is spread across 4 hosts and RAID-6 across 6 hosts. For SCSI-2/3 reservations the controller has to be able to exclusively lock the disk for write access, and this is not possible in the distributed architecture. With in-guest iSCSI, the iSCSI target acts as the locking controller.

Simon
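
To illustrate why per-component locking does not add up to a disk-wide reservation, here is a toy Python simulation. It is not how Virtual SAN works internally (Virtual SAN has no such locks); it simply assumes a naive scheme in which each host holding a component grants a first-come, first-served lock, and two cluster nodes race to lock every host of one object in opposite orders. The host counts are the ones from the reply above; `race_for_components` and the host names are hypothetical.

```python
from typing import Dict, List, Optional

# Hosts spanned by one object, as described in the reply above.
MIN_HOSTS = {"RAID-1 FTT=1": 3, "RAID-5": 4, "RAID-6": 6}


def race_for_components(hosts: List[str]) -> Dict[str, List[str]]:
    """Two nodes try to lock every component host of one object, one host per step.

    node-a walks the hosts left to right, node-b right to left, and each
    per-host lock is first come, first served. Returns the hosts each node holds.
    """
    owner: Dict[str, Optional[str]] = {h: None for h in hosts}
    plans = {"node-a": list(hosts), "node-b": list(reversed(hosts))}
    held: Dict[str, List[str]] = {"node-a": [], "node-b": []}
    for step in range(len(hosts)):
        for node, plan in plans.items():
            host = plan[step]
            if owner[host] is None:
                owner[host] = node
                held[node].append(host)
    return held


for layout, count in MIN_HOSTS.items():
    hosts = [f"esx{i}" for i in range(1, count + 1)]
    held = race_for_components(hosts)
    # Neither node ends up with every component, so neither has an exclusive lock.
    print(layout, {node: len(h) for node, h in held.items()})
```

Running it gives a 2/1 split for RAID-1 FTT=1, 2/2 for RAID-5 and 3/3 for RAID-6. A single arbiter, which is the role the in-guest iSCSI target plays, avoids the split because every reservation request is decided in one place.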

Hi Mr vSan

Do you have a step-by-step guide for MSCS on vSAN?

I don't understand how you use in-host iSCSI to map to a vSAN object.

I would appreciate it.

thanks

Dan

Hi Dan

Basically you would use the in-guest iSCSI initiator in the MSCS cluster nodes, and the iSCSI target(s) would exist on the VM that you added the VMDKs to.

Simon

Hi MrVSAN

Do you have a guide on how to install and configure an MSCS 2003 cluster with IIS on vSAN?

Best regards

Christian

Hi Christian

Installing IIS on a 2003 MSCS cluster should be no different from installing it on a physical MSCS cluster, provided the MSCS nodes use iSCSI to access the storage LUNs presented by the iSCSI target appliance.

Hi MrVSAN,

Any news on VMware supporting use of iSCSI target VM on VSAN for MSFCI with shared storage? More importantly, why can’t VSAN iSCSI targeting be done at the ESXi host level?

Thanks

Yuriy

Hi Yuriy

This is a bit of a moot point now, because vSAN 6.7 supports creating iSCSI targets that can be consumed by physical and virtual MSCS/WSFC nodes.